Enterprise Data Platform with Databricks Part 5

Introduction

Welcome back to our blog series on building modern enterprise data platforms!

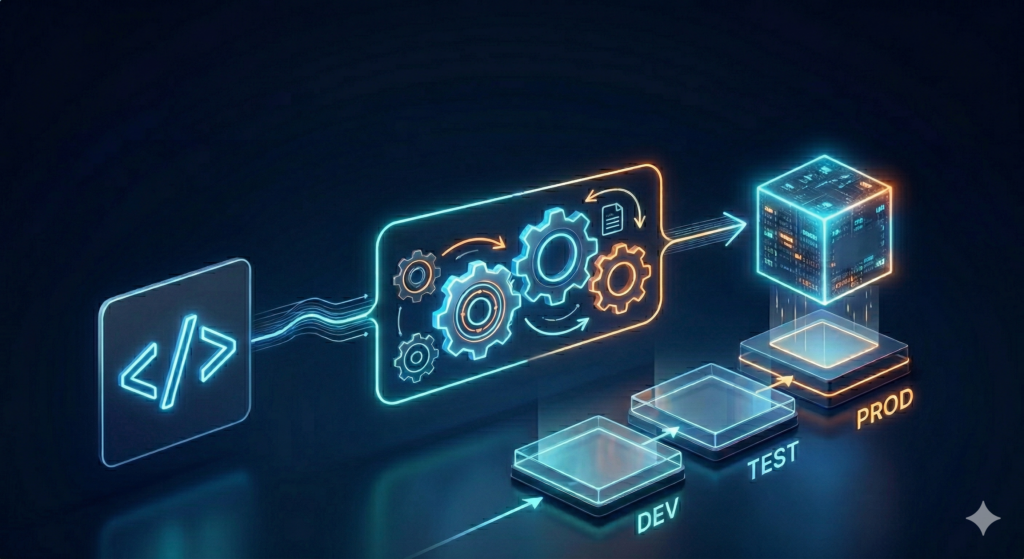

After developing the scripts and dbt models directly within the Databricks interactive environment and testing them against real data, we migrate these artifacts to a professional deployment structure. To do this, we use Databricks Asset Bundles (DABs), a tool that adapts software development best practices for data projects. DABs are used to bundle notebooks, transformations, job definitions, and all environment configurations for Databricks into a single deployment package.

Visualization created with the help of AI (Gemini)

Databricks Asset Bundles

The CI process is based on using GitHub as a central repository for all project artifacts: from PySpark ingestion scripts to the finished dbt Gold model. As soon as the code is pushed to GitHub, GitHub Actions takes over the role of orchestrating the CI/CD pipeline. The goal is to perform automated tests and validations before the code is deployed to the Databricks environment. The following tests are executed:

- Validating the PySpark code: When ingestion scripts change, unit tests ensure that critical logic components, such as API calls, continue to function as expected. We also verify that formatting guidelines and common programming standards have been followed.

- dbt tests: The pipeline first runs dbt compile, which ensures that all SQL references can be resolved correctly and that there are no syntax errors in the models. After that, the commands dbt run --select my_changed_model+ and dbt test --select my_changed_model+ build the changed model together with all models that depend on it and run their associated tests. This covers both generic technical tests, such as checking for unique primary keys (unique) or the absence of null values (not_null), and specific business tests written as dedicated SQL statements.
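A unit test for an API call in an ingestion script could look roughly like the sketch below. It is only an illustration under assumptions: `fetch_records`, the `items` and `id` fields, and the URL are hypothetical names that do not come from our project, and the HTTP session is replaced by a mock so that the test runs without network access.

```python
from unittest.mock import Mock


def fetch_records(session, url):
    """Hypothetical ingestion helper: call an API and return its records."""
    response = session.get(url, timeout=30)
    response.raise_for_status()  # fail loudly on HTTP errors
    payload = response.json()
    # Keep only records that carry an id, so downstream keys stay non-null.
    return [row for row in payload["items"] if row.get("id") is not None]


def test_fetch_records_drops_rows_without_id():
    # The mock stands in for an HTTP session object (an assumption),
    # so no real API is contacted during the test.
    session = Mock()
    session.get.return_value.json.return_value = {
        "items": [{"id": 1}, {"id": None}, {"name": "no id"}]
    }

    records = fetch_records(session, "https://example.invalid/api")

    assert records == [{"id": 1}]
    session.get.assert_called_once_with(
        "https://example.invalid/api", timeout=30
    )
```

Passing the session in as a parameter is a design choice that keeps the function testable: the CI run exercises the parsing and filtering logic, while the real API is only called on Databricks.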

Only after all PySpark and dbt tests in the pipeline have run successfully does the final step of the CI pipeline take place. We use Databricks Asset Bundles (DABs) to automatically and consistently deploy the entire state of our project to the Databricks environment. Instead of configuring files, scripts, and resources in the GUI, the entire project configuration and infrastructure are defined in a central databricks.yml configuration file. This approach offers us several key advantages:

- Full automation & validation: The command databricks bundle validate checks the entire project, including the configuration file, for syntax errors, correctness, and completeness. After successful validation, the databricks bundle deploy command is used to deploy the entire package to the target environment via the GitHub Action.

- Environment independence: Using DABs, we can control deployments to different environments such as dev, test, or prod. In the bundle's configuration file, we define one target per environment together with environment-specific variables, which are resolved for the selected target during deployment. This ensures that code that has been successfully tested in the development environment is deployed unchanged all the way to the production environment.

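- Consistency: A bundle is deployed as one unit. Every resource defined in it is created or updated from the same Git revision in a single databricks bundle deploy run instead of being changed individually in the GUI, which limits drift between the code in the Git repository and the actual configuration in Databricks. A deploy is not a strict all-or-nothing transaction, though: if a run fails partway, some resources may already have been updated, and rerunning the deploy after fixing the error brings the workspace back in line with the repository.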
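To make the order of the steps concrete, here is a minimal sketch of a script that a GitHub Actions job could call. It is an assumption about how such a gate might be written, not our actual workflow: `build_commands`, `run_pipeline`, and the use of pytest as the test runner are illustrative choices. The dbt and Databricks CLI commands are the ones named in this post, with `--target` selecting the environment.

```python
import subprocess
import sys


def build_commands(target, changed_model):
    """Return the CI steps in order, each as an argument list."""
    selector = f"{changed_model}+"  # the model plus everything downstream
    return [
        ["python", "-m", "pytest"],  # assumed runner for the PySpark unit tests
        ["dbt", "compile"],
        ["dbt", "run", "--select", selector],
        ["dbt", "test", "--select", selector],
        ["databricks", "bundle", "validate", "--target", target],
        ["databricks", "bundle", "deploy", "--target", target],
    ]


def run_pipeline(commands, runner=subprocess.run):
    """Run each step in order and stop at the first failure.

    Returns the number of steps that completed successfully.
    """
    for done, command in enumerate(commands):
        result = runner(command)
        if result.returncode != 0:
            print(f"step failed: {' '.join(command)}", file=sys.stderr)
            return done
    return len(commands)
```

Calling `run_pipeline(build_commands("dev", "my_changed_model"))` would run the tests first and reach `databricks bundle deploy` only if every earlier step exited with code 0. The `runner` parameter exists so that the ordering logic can be tested without invoking the real CLIs.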

Outlook

Deployment is now automated and technically sound. But as our platform grows, how do we maintain a clear understanding of the data flows and ensure consistent data quality? In the sixth installment of our series, we will take an in-depth look at testing and lineage.

There, we will show how we ensure transparency regarding data sources and use automated quality checks to build lasting trust in the data platform.

Stay tuned!